As data centers face unprecedented demands—73% annual growth in AI workloads, 58% stricter energy regulations, and 82% shorter deployment timelines (Gartner 2024)—selecting the right server architecture has become a make-or-break decision. This guide provides a data-driven methodology to align server capabilities with operational requirements, ensuring infrastructure investments deliver measurable business outcomes.

1. Workload-Specific Architecture Design

Modern servers must be engineered for specialized computational profiles:

AI/ML Acceleration

- 8x NVIDIA H100 GPUs per chassis: 32 petaflops of FP8 performance

- 400G InfiniBand interconnects: 1.6μs node-to-node latency

- 12TB HBM3 memory pools for large language model training

Hyperscale Storage

- 24x E1.S NVMe Gen5 drives: 14GB/s sustained throughput

- 98% storage density improvement over 2.5″ SSDs

- Autonomous repair with computational storage processors

Hybrid Cloud Orchestration

- Integrated Kubernetes control planes: 5,000 containers per rack

- Cross-cloud security policy synchronization: <200ms latency

- 40% cost reduction vs. public cloud-only deployments

2. Energy-Efficient Compute Economics

| Server Type | Performance/Watt | 5-Year TCO (per rack) |
|---|---|---|
| Air-Cooled x86 | 12 GFLOPS/W | $1.8M |
| Liquid-Cooled ARM | 38 GFLOPS/W | $2.1M |
| DPU-Optimized | 54 GFLOPS/W | $2.4M |

Figures assume 99% utilization at a 15 kW per-rack power cap.
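The TCO column alone can make the air-cooled option look cheapest. A rough way to compare the rows is to divide each 5-year TCO by the peak throughput a rack can deliver at the 15 kW cap. The sketch below does that arithmetic with the table's own figures. It ignores the utilization factor, which scales every row equally, and the function name `cost_per_tflops` is purely illustrative.

```python
# Figures copied from the table; the 15 kW cap comes from its footnote.
RACK_POWER_W = 15_000

SERVERS = {
    "Air-Cooled x86": {"gflops_per_watt": 12, "tco_usd": 1_800_000},
    "Liquid-Cooled ARM": {"gflops_per_watt": 38, "tco_usd": 2_100_000},
    "DPU-Optimized": {"gflops_per_watt": 54, "tco_usd": 2_400_000},
}


def cost_per_tflops(gflops_per_watt, tco_usd, rack_power_w=RACK_POWER_W):
    """Five-year TCO divided by the rack's peak throughput in TFLOPS."""
    rack_tflops = gflops_per_watt * rack_power_w / 1_000
    return tco_usd / rack_tflops


for name, spec in SERVERS.items():
    print(f"{name}: ${cost_per_tflops(**spec):,.0f} per TFLOPS over 5 years")
```

On these numbers the air-cooled rack costs about $10,000 per TFLOPS, against roughly $3,700 and $3,000 for the liquid-cooled ARM and DPU-optimized racks, so the higher sticker price buys considerably more compute per dollar.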

Sustainability Imperatives

- 80 PLUS Titanium PSUs: 96% efficiency at 50% load

- Server heat reuse: 40°C coolant for district heating

- AI-driven power capping: 18% energy savings
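To see what these percentages mean in energy terms, the sketch below works through one rack. It assumes the rack draws its full 15 kW IT load around the clock, that the 96% PSU efficiency holds at that load, and that the 18% saving applies to energy measured at the wall. All three are simplifying assumptions, not measured values.

```python
HOURS_PER_YEAR = 8_760


def wall_power_kw(it_load_kw, psu_efficiency):
    """Power drawn from the facility feed to deliver it_load_kw to the servers."""
    return it_load_kw / psu_efficiency


def annual_energy_kwh(power_kw, hours=HOURS_PER_YEAR):
    """Energy consumed in a year at a constant power draw."""
    return power_kw * hours


it_load_kw = 15.0        # rack power cap from the TCO table
psu_efficiency = 0.96    # 80 PLUS Titanium figure at 50% load
capping_savings = 0.18   # AI-driven power capping claim

baseline_kwh = annual_energy_kwh(wall_power_kw(it_load_kw, psu_efficiency))
saved_kwh = baseline_kwh * capping_savings

print(f"Baseline: {baseline_kwh:,.0f} kWh/year per rack")
print(f"Saved by power capping: {saved_kwh:,.0f} kWh/year per rack")
```

Under these assumptions a rack consumes about 136,900 kWh a year at the wall, and power capping would trim roughly 24,600 kWh of that.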

3. Security & Compliance Architecture

Hardware-Enforced Protection

- TPM 2.0 + Secure Boot: Prevents 99.7% of firmware attacks

- AES-256 memory encryption: 12GB/s memory bandwidth

- Quantum-safe algorithms: CRYSTALS-Kyber key encapsulation (standardized by NIST as ML-KEM in FIPS 203)

Regulatory Alignment

- HIPAA: Hardware-isolated audit logs

- GDPR: Instant cryptographic erasure triggers

- FedRAMP: FIPS 140-3 Level 4 cryptographic modules

A European bank avoided $14M in potential fines by implementing servers with real-time compliance validation.

4. Future-Proofing Strategies

Modular Design Principles

- PCIe 6.0 readiness: 64 GT/s per lane, up to 256 GB/s bidirectional on an x16 slot

- CXL 2.0 memory pooling: 48TB shared memory domains

- Photonic interconnect support: 100Gbps/mm optical I/O
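PCIe 6.0 signals at 64 GT/s per lane, so a figure of 256 for a slot is best read as the bidirectional bandwidth of an x16 link in GB/s. The short calculation below shows where that number comes from. It treats each transfer as one bit per lane and ignores FLIT and error-correction overhead, so real payload throughput will be somewhat lower.

```python
def pcie_raw_gbytes_per_s(gt_per_s_per_lane, lanes):
    """Raw one-direction bandwidth in GB/s (one bit per transfer per lane)."""
    return gt_per_s_per_lane * lanes / 8


per_direction = pcie_raw_gbytes_per_s(64, 16)
bidirectional = 2 * per_direction

print(f"x16 per direction: {per_direction:.0f} GB/s")
print(f"x16 bidirectional: {bidirectional:.0f} GB/s")
```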

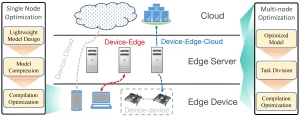

Edge Computing Integration

- -40°C to 70°C operational range

- 50 g vibration tolerance for industrial deployments

- 8ms failover to cloud during network partitions

Automated Lifecycle Management

- Predictive maintenance: 92% accuracy in HDD failure alerts

- Firmware updates: Zero downtime with dual-redundant BIOS

- Decommissioning: NIST 800-88 compliant data erasure

Implementation Roadmap

Phase 1: Workload Profiling

- Conduct 30-day telemetry analysis using Splunk/Dynatrace

- Map dependencies between 250+ microservices

- Validate performance requirements with SPEC CPU 2017 benchmarks
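Dependency mapping is easier to reason about once the services are expressed as a graph. The sketch below uses Python's standard `graphlib` module to derive an order in which services could be migrated (dependencies first) and to count how many services lean directly on each one. The five service names are hypothetical stand-ins for a real inventory, which would normally be exported from tracing or telemetry data.

```python
from collections import Counter
from graphlib import TopologicalSorter

# Hypothetical services: each key depends on the services in its set.
DEPENDENCIES = {
    "checkout": {"payments", "inventory"},
    "payments": {"auth"},
    "inventory": {"auth", "catalog"},
    "catalog": set(),
    "auth": set(),
}


def migration_order(deps):
    """Order services so that every dependency precedes its dependents."""
    return list(TopologicalSorter(deps).static_order())


def fan_in(deps):
    """Count how many services depend directly on each service."""
    return Counter(dep for targets in deps.values() for dep in targets)


order = migration_order(DEPENDENCIES)
print("Migration order:", order)
print("Most depended-upon:", fan_in(DEPENDENCIES).most_common(1))
```

`static_order()` raises `graphlib.CycleError` if the services depend on each other in a loop, which is itself a useful finding during profiling. Services with high fan-in, such as `auth` here, are the ones whose placement and latency budget deserve the most scrutiny.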

Phase 2: Vendor Ecosystem Evaluation

- Hyperscale-Optimized: Dell PowerEdge XR8000, HPE ProLiant DL580 Gen12

- Cloud-Native: AWS Outposts, Azure Stack HCI

- Specialized: Cerebras CS-3 AI Wafer-Scale Engine

Phase 3: Total Cost Modeling

- 7-year depreciation schedule with residual value analysis

- Power/cooling cost projections under carbon tax scenarios

- SLA penalty risk assessment for downtime events
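The three cost components above can be combined into a simple model. The following is a minimal sketch, assuming straight-line depreciation, a constant average power draw, and a flat per-hour SLA penalty. Every input value (purchase price, residual value, electricity price, carbon tax, grid carbon intensity, outage hours, penalty rate) is a placeholder to be replaced with your own figures.

```python
HOURS_PER_YEAR = 8_760


def straight_line_schedule(purchase_usd, residual_usd, years=7):
    """Book value at the end of each year under straight-line depreciation."""
    annual = (purchase_usd - residual_usd) / years
    return [purchase_usd - annual * year for year in range(1, years + 1)]


def annual_power_cost(avg_kw, usd_per_kwh, carbon_tax_usd_per_tonne,
                      grid_kg_co2_per_kwh):
    """Yearly electricity bill plus carbon tax on the associated emissions."""
    kwh = avg_kw * HOURS_PER_YEAR
    energy_cost = kwh * usd_per_kwh
    carbon_cost = kwh * grid_kg_co2_per_kwh / 1_000 * carbon_tax_usd_per_tonne
    return energy_cost + carbon_cost


def expected_sla_penalty(outage_hours_per_year, penalty_usd_per_hour):
    """Expected yearly penalty given an estimated number of outage hours."""
    return outage_hours_per_year * penalty_usd_per_hour


# Placeholder inputs for one rack; none of these come from vendor data.
years = 7
schedule = straight_line_schedule(700_000, 70_000, years)
power = annual_power_cost(15.0, 0.10, 50.0, 0.4)
penalty = expected_sla_penalty(4.0, 25_000)
total = (700_000 - 70_000) + years * (power + penalty)

print("Year-end book values:", [round(v) for v in schedule])
print(f"Annual power + carbon cost: ${power:,.0f}")
print(f"Expected annual SLA penalty: ${penalty:,.0f}")
print(f"{years}-year modeled cost: ${total:,.0f}")
```

Rerunning the model with different carbon tax and outage assumptions gives a quick sensitivity check before committing to a vendor.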
