Backplane Bandwidth

Backplane bandwidth, also known as switching capacity, is the maximum amount of data that can be exchanged between the switch's interface processors (or interface cards) and its data bus. It is like the total number of lanes on an overpass. Because all communication between ports must pass through the backplane, the bandwidth the backplane provides becomes the bottleneck when several ports communicate concurrently.

The larger the backplane bandwidth, the more bandwidth is available to each port and the faster data can be exchanged; the smaller it is, the less bandwidth each port gets and the slower the exchange. In other words, backplane bandwidth determines the data processing capability of the switch: the higher the backplane bandwidth, the stronger that capability. To achieve full-duplex, non-blocking transmission, the switch must meet a minimum backplane bandwidth requirement.

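The minimum is calculated as follows:

Backplane bandwidth = number of ports × port rate × 2

The factor of 2 accounts for full duplex: each port can send and receive at its rated speed at the same time.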

Tip: A Layer 3 switch is qualified only if both its forwarding rate and its backplane bandwidth meet the minimum requirements; meeting one without the other is not enough.

For example:

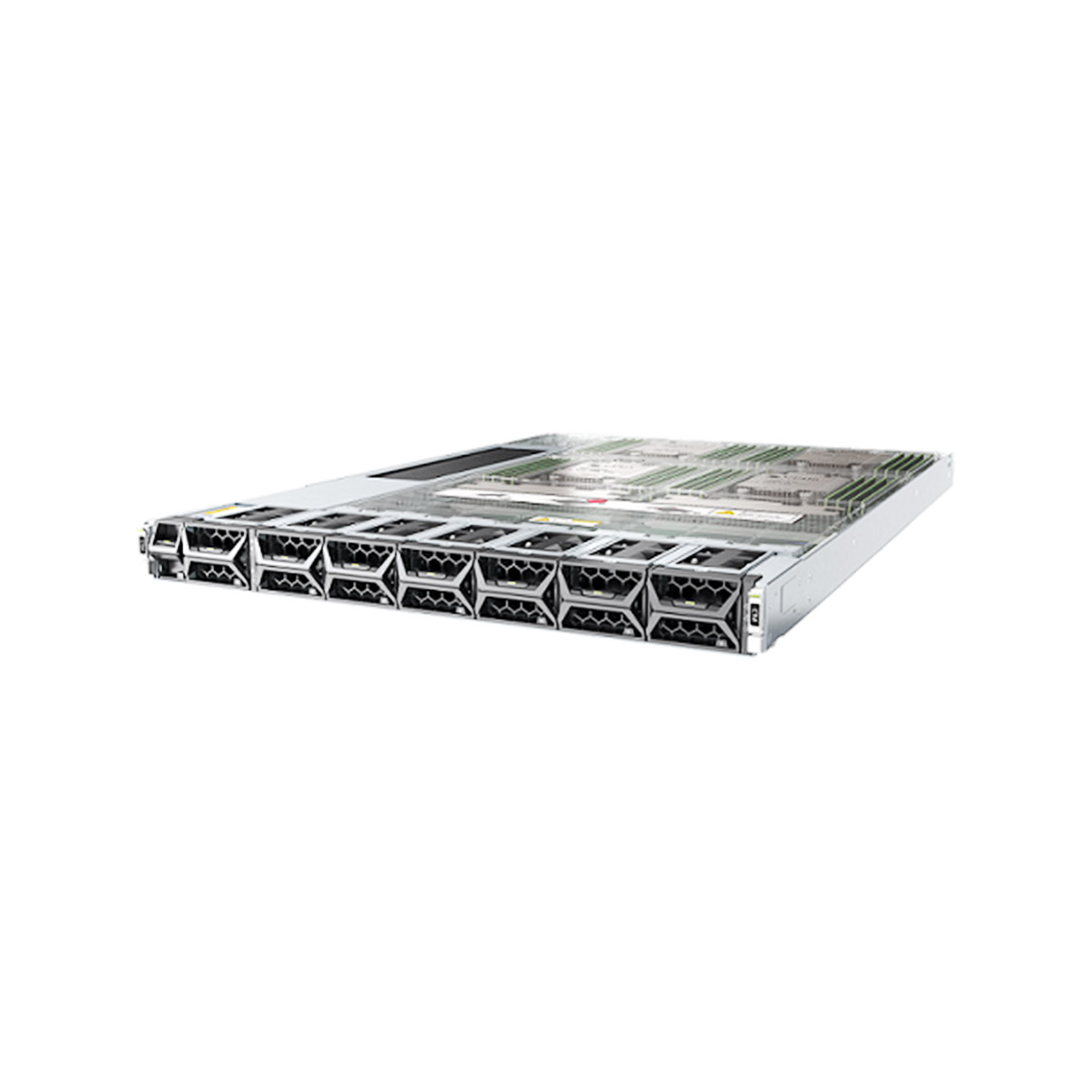

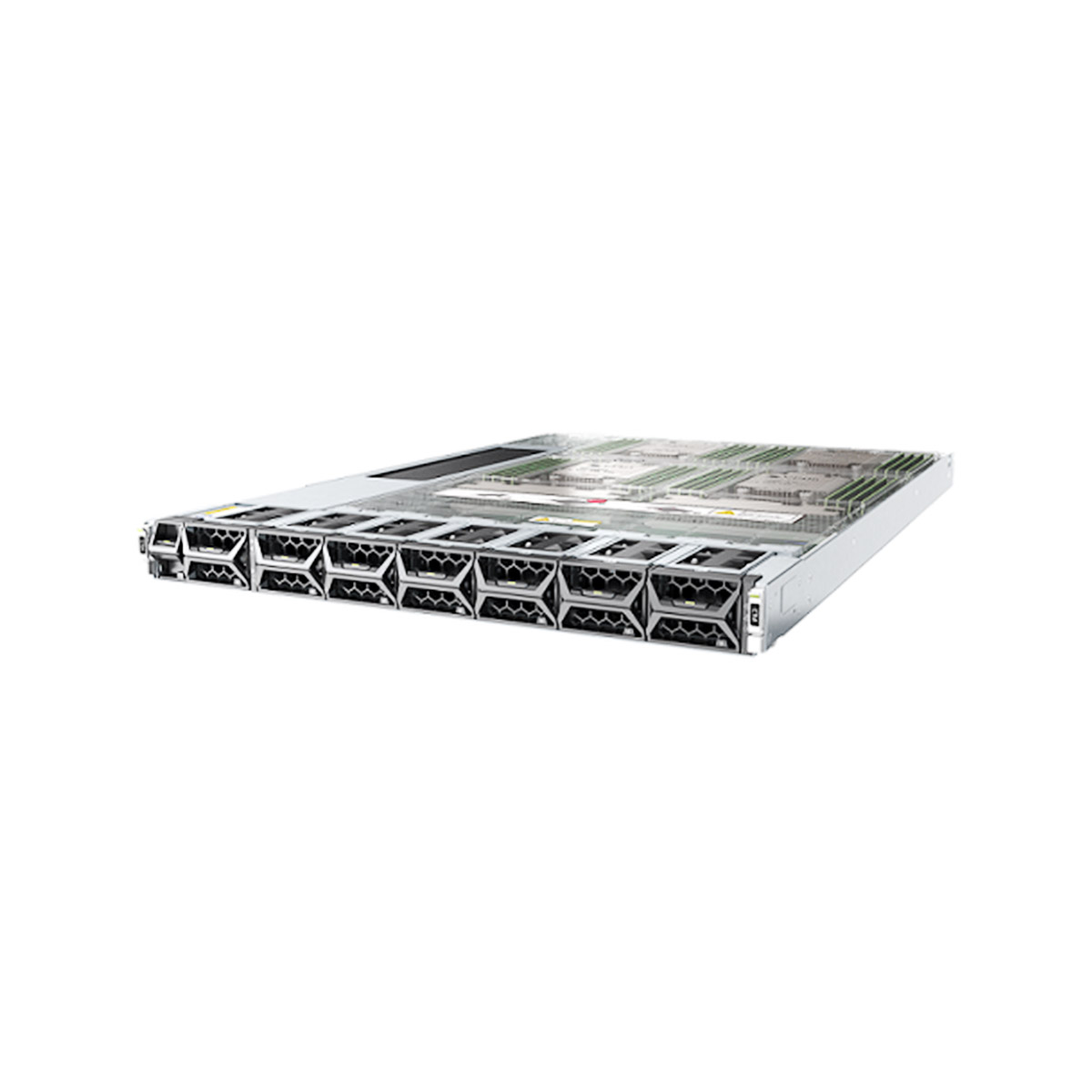

The S5736-S24S4XC switch has 24 ports. Switching capacity: 448 Gbps/1.36 Tbps.

The S3328TP-EI-DC switch supports 24 10/100BASE-T ports. Switching capacity: 12.8 Gbit/s.
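The backplane formula can be written as a short Python sketch, assuming every port runs full duplex at its rated speed. The function names `required_backplane_gbps` and `backplane_is_sufficient` are hypothetical helpers, not part of any vendor tool. Applied only to the 24 10/100BASE-T ports of the S3328TP-EI-DC (any other ports on the device are ignored here), it gives 4.8 Gbit/s, well within the stated 12.8 Gbit/s.

```python
def required_backplane_gbps(port_groups):
    """Minimum backplane bandwidth for full-duplex, non-blocking operation.

    port_groups: iterable of (port_count, port_rate_gbps) pairs.
    Each port sends and receives at its rated speed simultaneously,
    hence the factor of 2.
    """
    return sum(count * rate_gbps * 2 for count, rate_gbps in port_groups)


def backplane_is_sufficient(port_groups, backplane_gbps):
    """True if the stated backplane bandwidth covers all ports at wire speed."""
    return required_backplane_gbps(port_groups) <= backplane_gbps


# Only the 24 x 100 Mbit/s (0.1 Gbit/s) ports are counted in this sketch.
fe_ports = [(24, 0.1)]
print(round(required_backplane_gbps(fe_ports), 2))  # 4.8
print(backplane_is_sufficient(fe_ports, 12.8))       # True
```

Mixed configurations are handled by listing one pair per port type, for example `[(24, 1), (4, 10)]` for 24 Gigabit and 4 10-Gigabit ports.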

Layer 2 and Layer 3 Packet Forwarding Rate

Network data is made up of individual packets, and processing each packet consumes resources. The forwarding rate (also known as throughput) is the number of packets a switch can pass per unit of time without packet loss. Throughput is like the traffic flow across an overpass. It is one of the most important parameters of a Layer 3 switch and reflects the switch's actual performance. If throughput is too low, the switch becomes a network bottleneck and reduces the transmission efficiency of the entire network. A switch should be able to switch at wire speed, meaning its switching rate matches the data rate on the transmission line, which eliminates the switching bottleneck as far as possible. For a Layer 3 core switch to provide non-blocking transmission, the throughput required for all its ports must be less than or equal to both the nominal Layer 2 packet forwarding rate and the nominal Layer 3 packet forwarding rate; the switch can then achieve wire speed for both Layer 2 and Layer 3 switching.

The formula is as follows:

Throughput (Mpps) = number of 10 Gigabit ports × 14.88 Mpps + number of Gigabit ports × 1.488 Mpps + number of 100 Mbit/s (Fast Ethernet) ports × 0.1488 Mpps

If the calculated throughput does not exceed the switch's nominal throughput, the switch can forward at wire speed.

Include the 10 Gigabit and 100 Mbit/s terms only if the switch actually has such ports; otherwise leave them out.

For a switch with 24 Gigabit ports, the fully configured throughput should reach 24 × 1.488 Mpps = 35.71 Mpps to ensure non-blocking packet switching when all ports run at wire speed. Similarly, a switch that can provide up to 176 Gigabit ports needs a throughput of at least 261.9 Mpps (176 × 1.488 Mpps ≈ 261.9 Mpps) to be a truly non-blocking fabric design.
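The throughput formula and the two worked examples above can be reproduced with a minimal Python sketch. The per-port rates are the wire-speed figures from the text; the dictionary keys and the function name `required_throughput_mpps` are hypothetical. Port types a switch does not have are simply left out of the input, which matches the rule that absent port types are not counted.

```python
# Wire-speed 64-byte packet rates per port, in Mpps, as given in the text.
MPPS_PER_PORT = {
    "10GE": 14.88,
    "GE": 1.488,
    "FE": 0.1488,  # 100 Mbit/s Fast Ethernet
}


def required_throughput_mpps(port_counts):
    """Sum the wire-speed rate of every port; missing port types contribute nothing."""
    return sum(MPPS_PER_PORT[kind] * count for kind, count in port_counts.items())


print(round(required_throughput_mpps({"GE": 24}), 3))   # 35.712
print(round(required_throughput_mpps({"GE": 176}), 3))  # 261.888
```

The result is then compared with the nominal Layer 2 and Layer 3 forwarding rates on the switch's datasheet.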

So, how is 1.488 Mpps obtained?

Wire-speed packet forwarding is measured as the number of 64-byte packets (the smallest Ethernet frame) sent per unit of time. For Gigabit Ethernet, the calculation is:

1,000,000,000 bps / 8 bits per byte / (64 + 8 + 12) bytes = 1,488,095 pps

Note: For each 64-byte Ethernet frame, the fixed overhead of the 8-byte preamble (including the start-of-frame delimiter) and the 12-byte inter-frame gap must also be counted. A Gigabit Ethernet port forwarding 64-byte packets at wire speed therefore has a packet forwarding rate of 1.488 Mpps. The rate of a Fast Ethernet port at wire speed is exactly one-tenth of that, 148.8 kpps.

For 10 Gigabit Ethernet, a wire-speed port has a packet forwarding rate of 14.88 Mpps.

For Gigabit Ethernet, a wire-speed port has a packet forwarding rate of 1.488 Mpps.

For Fast Ethernet, the packet forwarding rate of a wire-speed port is 0.1488 Mpps.
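The three per-port rates can be checked with a small Python sketch that adds the 8 bytes of fixed overhead (preamble and start-of-frame delimiter) and the 12-byte inter-frame gap to each frame, then divides the line rate by the resulting bits per frame. The constant and function names are hypothetical; integer division is used because only whole frames count.

```python
PREAMBLE_BYTES = 8    # 7-byte preamble + 1-byte start-of-frame delimiter
MIN_FRAME_BYTES = 64  # smallest Ethernet frame
IFG_BYTES = 12        # minimum inter-frame gap


def wire_speed_pps(line_rate_bps, frame_bytes=MIN_FRAME_BYTES):
    """Maximum whole frames per second a port can carry at the given line rate."""
    bits_per_frame = (frame_bytes + PREAMBLE_BYTES + IFG_BYTES) * 8
    return line_rate_bps // bits_per_frame


for name, rate in [("Fast Ethernet", 100_000_000),
                   ("Gigabit Ethernet", 1_000_000_000),
                   ("10 Gigabit Ethernet", 10_000_000_000)]:
    print(f"{name}: {wire_speed_pps(rate):,} pps")
# Fast Ethernet: 148,809 pps
# Gigabit Ethernet: 1,488,095 pps
# 10 Gigabit Ethernet: 14,880,952 pps
```

Passing a larger `frame_bytes`, for example 1518 bytes (the largest standard untagged frame), shows why 64-byte frames are the worst case: at 1 Gbit/s only 81,274 frames per second fit on the wire.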

So if all of the above conditions (backplane bandwidth, Layer 2 forwarding rate, and Layer 3 forwarding rate) are met, we can say the core switch is truly wire-speed and non-blocking.

In general, a switch that meets both the backplane bandwidth and the forwarding rate requirements is a qualified switch.
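The combined qualification check can be sketched as follows. This is a simplified model, assuming every port group is described by its count, line rate, and wire-speed packet rate; the class and function names and the switch figures in the usage lines are hypothetical, invented for illustration rather than taken from any datasheet.

```python
from dataclasses import dataclass


@dataclass
class PortGroup:
    count: int
    rate_gbps: float      # line rate per port
    mpps_per_port: float  # wire-speed 64-byte packet rate per port


@dataclass
class SwitchSpec:
    backplane_gbps: float  # stated switching capacity
    l2_mpps: float         # stated Layer 2 forwarding rate
    l3_mpps: float         # stated Layer 3 forwarding rate


def is_non_blocking(ports, spec):
    """True only if backplane bandwidth AND both forwarding rates meet the minimums."""
    needed_gbps = sum(p.count * p.rate_gbps * 2 for p in ports)  # full duplex
    needed_mpps = sum(p.count * p.mpps_per_port for p in ports)
    return (needed_gbps <= spec.backplane_gbps
            and needed_mpps <= spec.l2_mpps
            and needed_mpps <= spec.l3_mpps)


# Hypothetical 24 x GE switch: needs 48 Gbps and about 35.7 Mpps.
ports = [PortGroup(count=24, rate_gbps=1, mpps_per_port=1.488)]
print(is_non_blocking(ports, SwitchSpec(backplane_gbps=48, l2_mpps=36, l3_mpps=36)))  # True
print(is_non_blocking(ports, SwitchSpec(backplane_gbps=48, l2_mpps=36, l3_mpps=30)))  # False
```

The second call fails only on the Layer 3 rate, which is the case the tip about needing both requirements warns against.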

A switch with a relatively large backplane but relatively small throughput has either kept headroom for future upgrades and expansion, or has problems with software efficiency or its dedicated chip circuit design. A switch with a relatively small backplane but relatively large throughput has relatively high overall performance. However, while the manufacturer's stated backplane bandwidth can be trusted, the stated throughput cannot, because the latter is a design value that is very difficult to test, and testing it is not very meaningful.

Scalability

Scalability covers two aspects:

1. Number of slots: Slots hold the various functional modules and interface modules. Since each interface module provides a certain number of ports, the number of slots fundamentally determines how many ports the switch can accommodate. In addition, every functional module (such as a Supervisor Engine module, Voice over IP module, extended service module, network monitoring module, or security service module) occupies a slot, so the number of slots also fundamentally determines the scalability of the switch.

2. Module types: The more module types supported (such as LAN interface modules, WAN interface modules, ATM interface modules, and extended function modules), the more scalable the switch. Taking LAN interface modules alone as an example, these should include RJ-45, GBIC, SFP, and 10 Gbps modules to meet the needs of complex environments and network applications in medium and large networks.

Layer 4 Switching

Layer 4 switching is used to provide fast access to network services. In Layer 4 switching, forwarding decisions are based not only on MAC addresses (Layer 2 bridging) or source/destination IP addresses (Layer 3 routing), but also on TCP/UDP (Layer 4) application port numbers, and it is designed for high-speed intranet applications. In addition to load balancing, Layer 4 switches support traffic flow control based on application type and user ID. Moreover, because a Layer 4 switch sits directly in front of the servers and is aware of application session content and user privileges, it is an ideal platform for preventing unauthorized access to servers.
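To make port-aware forwarding concrete, here is a minimal Python sketch. It assumes a hypothetical table that maps a destination TCP/UDP port to a pool of servers, and spreads flows across a pool by hashing the flow's addresses, protocol, and ports, so every packet of one flow reaches the same server. Real Layer 4 switches implement this in hardware with vendor-specific policies; the pool addresses and the names `FlowKey`, `POOLS`, and `choose_server` are illustrative only.

```python
import hashlib
from typing import NamedTuple, Optional


class FlowKey(NamedTuple):
    src_ip: str
    dst_ip: str
    protocol: str  # "tcp" or "udp"
    src_port: int
    dst_port: int


# Hypothetical server pools, selected by destination port (i.e. by application).
POOLS = {
    80: ["10.0.0.11", "10.0.0.12", "10.0.0.13"],  # HTTP
    53: ["10.0.1.21", "10.0.1.22"],               # DNS
}


def choose_server(flow: FlowKey) -> Optional[str]:
    """Pick a server for this flow, or None if no Layer 4 rule matches."""
    pool = POOLS.get(flow.dst_port)
    if pool is None:
        return None  # fall back to ordinary Layer 2/3 forwarding
    # Hash the full 5-tuple so all packets of one flow map to the same server.
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return pool[int.from_bytes(digest[:4], "big") % len(pool)]


web_flow = FlowKey("192.0.2.10", "198.51.100.1", "tcp", 51514, 80)
ssh_flow = FlowKey("192.0.2.10", "198.51.100.1", "tcp", 51515, 22)
print(choose_server(web_flow))  # one of the HTTP servers, the same on every call
print(choose_server(ssh_flow))  # None
```

A cryptographic hash is used here only because Python's built-in `hash()` for strings changes between runs; a switch would use a much cheaper hardware hash.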

Modular Redundancy

Redundancy is what guarantees safe network operation. No vendor can guarantee that its products will never fail in operation, so how quickly a device can switch over when a failure occurs depends on its redundancy capability. For core switches, important components should be redundant, such as management modules and power supplies, to keep the network running as stably as possible.

Routing Redundancy

HSRP and VRRP are used to provide load sharing and hot backup for the core equipment. When one of the core switches or one of the dual aggregation switches fails, the Layer 3 routing function and the virtual gateway can switch over quickly, providing redundant backup over dual links and keeping the whole network stable.
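VRRP decides which router acts as the virtual gateway through a master election. The Python sketch below models only that election, simplified from the VRRP rule that the highest priority wins and a tie goes to the higher IP address; it ignores advertisement timers, preemption settings, and HSRP's own state machine. The router names, addresses, and priorities are hypothetical.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Router:
    name: str
    ip: str
    priority: int  # VRRP priority; 255 is reserved for the IP address owner
    alive: bool = True


def elect_master(group: List[Router]) -> Optional[Router]:
    """Return the live router with the highest priority; ties go to the higher IP."""
    candidates = [r for r in group if r.alive]
    if not candidates:
        return None
    return max(candidates, key=lambda r: (r.priority, ipaddress.ip_address(r.ip)))


core_a = Router("Core-A", "10.1.1.2", priority=120)
core_b = Router("Core-B", "10.1.1.3", priority=100)
group = [core_a, core_b]

print(elect_master(group).name)  # Core-A (higher priority)
core_a.alive = False             # simulate a failure of Core-A
print(elect_master(group).name)  # Core-B takes over the virtual gateway
```

Giving the two core switches different priorities in different VRRP groups is one common way to get the load sharing mentioned above, with each switch acting as master for some VLANs and backup for the others.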
